Overview

Before DNS existed, every host had to download a hosts file containing host names and their corresponding IP addresses. As the number of hosts on the Internet grew, the size of this file grew as well, and so did the traffic generated by downloading it. The Domain Name System (DNS) was introduced to solve this problem.

The Domain Name System resolves a host name to an address. It uses a hierarchical naming scheme and a distributed database of IP addresses and their associated names.

IP Address

An IP address is a unique logical address assigned to a machine on a network. An IPv4 address has the following properties:

- It is the unique address assigned to each host on the Internet.
- It is 32 bits (4 bytes) long.
- It consists of two components: a network component and a host component.
- Each of the 4 bytes is written as a number from 0 to 255, and the numbers are separated by dots, for example 137.170.4.124.
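
The properties above can be checked with a short sketch that uses Python's standard `ipaddress` module. The address is the one from the text; the 16-bit network prefix is an assumption made only for illustration, since the text does not say where the network component ends.

```python
import ipaddress

# The example address from the text.
addr = ipaddress.IPv4Address("137.170.4.124")

# Each dotted-decimal field is one byte of the 32-bit value.
octets = [int(part) for part in str(addr).split(".")]
as_int = int(addr)

# Assumption: a 16-bit network prefix, chosen only for illustration.
iface = ipaddress.ip_interface("137.170.4.124/16")
network_part = iface.network                              # network component
host_part = int(iface.ip) & int(iface.network.hostmask)   # host component

print(octets)                     # the four bytes
print(as_int.bit_length() <= 32)  # the value fits in 32 bits
print(network_part, host_part)
```

With a different prefix length the same address splits into different network and host components, which is why the prefix has to be stated alongside the address.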

An IP address is a 32-bit number, whereas domain names are easy-to-remember names. For example, when we enter an email address, we type a symbolic string such as webmaster@tutorialspoint.com rather than a numeric address.

Uniform Resource Locator (URL)

A Uniform Resource Locator (URL) is a web address that uniquely identifies a document on the Internet.

This document can be a web page, an image, an audio or video file, or anything else present on the web.

For example, www.tutorialspoint.com/internet_technology/index.html is a URL for the file index.html, which is stored on the tutorialspoint web server under the internet_technology directory.

URL Types

There are two forms of URL as listed below:

- Absolute URL
- Relative URL

Absolute URL

An absolute URL is the complete address of a resource on the web. This complete address comprises the protocol used, the server name, the path name and the file name.

For example, in http://www.tutorialspoint.com/internet_technology/index.htm:

- http is the protocol.
- www.tutorialspoint.com is the server name.
- internet_technology is the path name.
- index.htm is the file name.

The protocol part tells the web browser how to handle the file. Other protocols that can be used to create a URL include:

- ftp
- https
- gopher
- mailto
- news
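
As a minimal sketch, the parts of the absolute URL discussed above can be separated with Python's standard `urllib.parse` module. The variable names are illustrative only.

```python
import posixpath
from urllib.parse import urlsplit

# The absolute URL used as the example in the text.
url = "http://www.tutorialspoint.com/internet_technology/index.htm"
parts = urlsplit(url)

protocol = parts.scheme       # the protocol, before "://"
server = parts.hostname       # the server name
# Split the path into its directory part and the file name.
directory, filename = posixpath.split(parts.path)

print(protocol, server, directory, filename)
```

The same call works for the other protocols listed above; for instance, `urlsplit("mailto:webmaster@tutorialspoint.com").scheme` gives the protocol of a mailto URL, which has no server part.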

Relative URL

A relative URL is a partial address of a web page. Unlike an absolute URL, it omits the protocol and server parts.

Relative URLs are used for internal links, i.e. links to files that belong to the same website as the web page on which the link is placed.

For example, to link to an image from tutorialspoint.com/internet_technology/internet_reference_models, we can use a relative URL of the form /internet_technologies/internet-osi_model.jpg.
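
The way a browser turns a relative URL into a full address can be sketched with `urljoin` from Python's standard library. The `http` protocol on the base page is an assumption, since the text gives the page address without one.

```python
from urllib.parse import urljoin

# Assumption: the page is served over http; the text omits the protocol.
base = "http://tutorialspoint.com/internet_technology/internet_reference_models"

# A relative URL starting with "/" is resolved from the root of the server.
from_root = urljoin(base, "/internet_technologies/internet-osi_model.jpg")

# A relative URL without a leading "/" is resolved from the page's directory.
from_page = urljoin(base, "internet-osi_model.jpg")

print(from_root)
print(from_page)
```

In both cases the protocol and server name are taken from the page that contains the link, which is why they can be left out of the relative URL.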

Difference between Absolute and Relative URL

| Absolute URL | Relative URL |
|---|---|
| Used to link to web pages on different websites. | Used to link to web pages within the same website. |
| Difficult to manage. | Easy to manage. |
| Changes when the server name or directory name changes. | Remains the same even if we change the server name or directory name. |
| Takes time to access. | Comparatively faster to access. |

Domain Name System Architecture

The Domain Name System comprises domain names, the domain name space and name servers, which are described below:

Domain Names

A domain name is a symbolic string associated with an IP address. Several top-level domain names are available: some are generic, such as com, edu, gov and net, while others are country-level domain names, such as au, in, za and us.

The following table shows the generic top-level domain names:

| Domain Name | Meaning |
|---|---|
| com | Commercial business |
| edu | Education |
| gov | U.S. government agency |
| int | International entity |
| mil | U.S. military |
| net | Networking organization |
| org | Non-profit organization |

The following table shows the country top-level domain names:

| Domain Name | Meaning |
|---|---|
| au | Australia |
| in | India |
| cl | Chile |
| fr | France |
| us | United States |
| za | South Africa |
| uk | United Kingdom |
| jp | Japan |
| es | Spain |
| de | Germany |
| ca | Canada |
| ee | Estonia |
| hk | Hong Kong |
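
As a small illustration, the two tables above can be turned into lookup dictionaries and used to classify the top-level domain of a name. The function name `describe_tld` is hypothetical, and the dictionaries hold only the entries listed in the tables, not every top-level domain that exists.

```python
# Entries copied from the two tables above; not an exhaustive list.
GENERIC_TLDS = {
    "com": "Commercial business", "edu": "Education",
    "gov": "U.S. government agency", "int": "International entity",
    "mil": "U.S. military", "net": "Networking organization",
    "org": "Non-profit organization",
}
COUNTRY_TLDS = {
    "au": "Australia", "in": "India", "cl": "Chile", "fr": "France",
    "us": "United States", "za": "South Africa", "uk": "United Kingdom",
    "jp": "Japan", "es": "Spain", "de": "Germany", "ca": "Canada",
    "ee": "Estonia", "hk": "Hong Kong",
}

def describe_tld(domain):
    """Classify the last label of a domain name using the tables above."""
    tld = domain.rstrip(".").rsplit(".", 1)[-1].lower()
    if tld in GENERIC_TLDS:
        return f"{tld}: generic ({GENERIC_TLDS[tld]})"
    if tld in COUNTRY_TLDS:
        return f"{tld}: country ({COUNTRY_TLDS[tld]})"
    return f"{tld}: not in the tables above"

print(describe_tld("www.tutorialspoint.com"))
```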

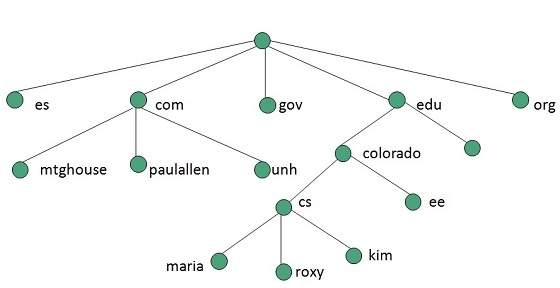

Domain Name Space

The domain name space refers a hierarchy in the internet naming structure. This hierarchy has multiple levels (from 0 to 127), with a root at the top. The following diagram shows the domain name space hierarchy:

In the above diagram each subtree represents a domain. Each domain can be partitioned into sub domains and these can be further partitioned and so on.
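
To make the hierarchy concrete, the sketch below lists the domains that contain a given name, starting at the root. The function name `ancestors` is hypothetical, and the root is written as "." here by convention.

```python
def ancestors(name):
    """Return the chain of domains from the root down to `name`."""
    labels = name.rstrip(".").split(".")
    # Walk from the rightmost label (top level) to the full name.
    chain = [".".join(labels[i:]) for i in range(len(labels) - 1, -1, -1)]
    return ["."] + chain  # "." stands for the root at level 0

for level, domain in enumerate(ancestors("www.tutorialspoint.com")):
    print(level, domain)
```

Each entry in the returned list is a subdomain of the entry before it, which mirrors the subtree structure described above.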

Name Server

Name server contains the DNS database. This database comprises of various names and their corresponding IP addresses. Since it is not possible for a single server to maintain entire DNS database, therefore, the information is distributed among many DNS servers.

Hierarchy of server is same as hierarchy of names.

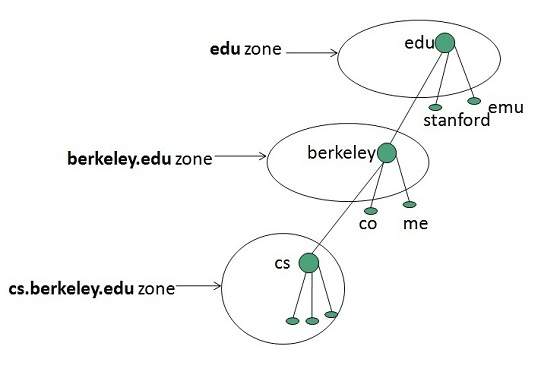

The entire name space is divided into the zones

Zones

A zone is a collection of nodes (subdomains) under the main domain. The server maintains a database, called a zone file, for every zone.

If the domain is not further divided into subdomains, then domain and zone refer to the same thing.

Information about the nodes in a subdomain is stored in servers at the lower levels; however, the original server keeps a reference to these lower-level servers.

Types of Name Servers

The following three categories of name servers manage the entire Domain Name System:

- Root Server
- Primary Server
- Secondary Server

Root Server

A root server is the top-level server, whose zone consists of the entire DNS tree. It does not store information about domains itself but delegates authority to other servers.

Primary Server

A primary server stores a file about its zone. It has the authority to create, maintain and update the zone file.

Secondary Server

A secondary server transfers the complete information about a zone from another server, which may be a primary or a secondary server. The secondary server does not have the authority to create or update a zone file.
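
The difference between the two roles can be sketched as a toy model. The class names, method names and the address 192.0.2.10 are hypothetical and chosen only for illustration; real name servers exchange zone data over the network rather than through Python objects.

```python
from types import MappingProxyType

class PrimaryServer:
    """Toy model: holds the editable copy of a zone file."""
    def __init__(self, zone_name):
        self.zone_name = zone_name
        self.zone_file = {}

    def update(self, name, ip):
        # Only the primary server may create or change records.
        self.zone_file[name] = ip

class SecondaryServer:
    """Toy model: holds a read-only copy obtained by a zone transfer."""
    def __init__(self):
        self.zone_file = MappingProxyType({})

    def transfer_from(self, source):
        # Copy the complete zone from a primary or another secondary,
        # then wrap the copy so that it cannot be modified.
        self.zone_file = MappingProxyType(dict(source.zone_file))

primary = PrimaryServer("tutorialspoint.com")
primary.update("www.tutorialspoint.com", "192.0.2.10")  # made-up address

secondary = SecondaryServer()
secondary.transfer_from(primary)
print(secondary.zone_file["www.tutorialspoint.com"])
```

Because `transfer_from` only reads the `zone_file` of its source, a secondary server in this model can also copy from another secondary, as the text allows.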

DNS Working

DNS translates a domain name into an IP address automatically. The following steps walk through the domain resolution process:

- When we type www.tutorialspoint.com into the browser, the browser asks the local DNS server for its IP address.
- If the local DNS server does not find the IP address of the requested domain name, it forwards the request to the root DNS server and asks it for the IP address.
- The root DNS server replies with a delegation: it does not know the IP address of www.tutorialspoint.com, but it knows the IP address of the com DNS server.
- The local DNS server then asks the com DNS server the same question.
- The com DNS server gives a similar reply: it does not know the IP address of www.tutorialspoint.com, but it knows the address of the tutorialspoint.com DNS server.
- The local DNS server then asks the tutorialspoint.com DNS server the same question.
- The tutorialspoint.com DNS server replies with the IP address of www.tutorialspoint.com.
- Finally, the local DNS server sends the IP address of www.tutorialspoint.com to the computer that sent the request.

Here, the local DNS server is at the ISP's end.
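
The resolution steps above can be simulated with a few in-memory tables. This is a toy sketch, not real DNS: the server names, the record layout and the address 192.0.2.10 are all hypothetical, and no network traffic is involved.

```python
# Toy name servers: each maps a name to a referral ("NS") or an answer ("A").
SERVERS = {
    "root": {"com": ("NS", "com-server")},
    "com-server": {"tutorialspoint.com": ("NS", "tp-server")},
    "tp-server": {"www.tutorialspoint.com": ("A", "192.0.2.10")},
}

def query(server, name):
    """Return the record for the longest suffix of `name` the server knows."""
    labels = name.split(".")
    for i in range(len(labels)):
        suffix = ".".join(labels[i:])
        if suffix in SERVERS[server]:
            return SERVERS[server][suffix]
    return None

def resolve(name, cache):
    """Play the role of the local DNS server; return (ip, servers_asked)."""
    if name in cache:              # answer already known locally
        return cache[name], []
    server, asked = "root", []
    while True:
        asked.append(server)
        record = query(server, name)
        if record is None:
            raise LookupError(name)
        kind, value = record
        if kind == "A":            # final answer: remember and return it
            cache[name] = value
            return value, asked
        server = value             # delegation: ask the next server

cache = {}
ip, asked = resolve("www.tutorialspoint.com", cache)
print(ip, asked)
```

A second call with the same cache returns the stored address without asking any server, which corresponds to the case where the local DNS server already knows the IP address.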